EC2 DFIR Workshop

Module Overview: File System Forensics - Part 3

- Identify Evidence of Persistence

- Check for Suspicious Files

- Review System Logs & Configs

- Perform a Timeline Analysis

Persistence – cron Jobs

To survive a reboot, malware must use a persistence mechanism. Two of the most common methods are cron jobs and start-up scripts.

The SANS Linux Intrusion Discovery Cheat Sheet provides the following two suggestions for looking at system-wide cron jobs:

`cat /etc/crontab`

`ls /etc/cron.*`

Be sure to check the cron jobs for all users.
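On a mounted evidence volume, the same review can be scripted. The sketch below is only an illustration: the function name `list_cron_jobs` and the mount point passed to it are hypothetical, and `/var/spool/cron` is assumed to be where per-user crontabs live (as on Red Hat style systems).

```shell
# list_cron_jobs ROOT
# Prints every cron file found under ROOT together with its contents.
# ROOT is the mount point of the evidence volume (an assumption, e.g. /mnt/evidence).
list_cron_jobs() {
    cron_root="${1%/}"
    for cron_path in "$cron_root"/etc/crontab "$cron_root"/etc/cron.* "$cron_root"/var/spool/cron; do
        # An unmatched glob stays literal, so skip anything that does not exist
        [ -e "$cron_path" ] || continue
        # -type f descends into cron.d, cron.daily, etc. and skips the directories themselves
        find "$cron_path" -type f -exec sh -c 'printf "=== %s ===\n" "$1"; cat "$1"' sh {} \;
    done
}
```

For example, `list_cron_jobs /mnt/evidence` would print the system crontab, every file in the `cron.*` directories, and each user's crontab, each preceded by a header line with its path.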

Persistence – Startup Scripts

Some malware will make use of the start-up scripts that Linux runs at boot time when entering a specific run level.

On some Linux distributions, these are found in /etc/init.d, but on Amazon Linux and Red Hat variants, the scripts will be in /etc/rc*.d.

Cron jobs and start-up scripts may have innocuous names and may call other scripts, so an investigator sometimes has to dig into the details to determine their true nature.
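One way to start digging is to search the start-up scripts for references that rarely belong in them. This is a rough sketch: the function name `flag_startup_refs` is hypothetical, and the pattern list (temporary directories and download or decoding tools) is an illustrative assumption, not a complete set of indicators.

```shell
# flag_startup_refs ROOT
# Prints file:line:text for start-up script lines that mention temporary
# directories or download/decoding tools. The pattern list is illustrative only.
flag_startup_refs() {
    init_root="${1%/}"
    for init_dir in "$init_root"/etc/init.d "$init_root"/etc/rc*.d; do
        [ -d "$init_dir" ] || continue
        # -r does not follow symlinks found inside the tree, so absolute links
        # cannot lead the search onto the analyst's own file system.
        # grep exits 1 when nothing matches; that is not an error here.
        grep -rnE '/tmp/|/var/tmp/|/dev/shm/|curl |wget |base64 ' "$init_dir" 2>/dev/null || true
    done
}
```

A hit is only a lead. Legitimate scripts also use temporary directories, so each match still needs to be read in context.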

Suspicious Files

There are certain locations and characteristics to look for when performing a manual search for suspicious files.

Examples:

- Unfamiliar files in the /tmp directory

- Unusual SUID files (explained on next slide)

- Very large files

- Unfamiliar encrypted files
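Two of these checks are easy to script with `find`. The following is a minimal sketch, assuming the evidence volume is mounted read-only at a path passed as the first argument. The function names are hypothetical, and including /var/tmp alongside /tmp is an addition made here.

```shell
# tmp_files ROOT: long listing of regular files under ROOT/tmp and ROOT/var/tmp
tmp_files() {
    tmp_root="${1%/}"
    for tmp_dir in "$tmp_root/tmp" "$tmp_root/var/tmp"; do
        [ -d "$tmp_dir" ] || continue
        # ls -la shows owner, mode, size and modification time for review
        find "$tmp_dir" -xdev -type f -exec ls -la {} +
    done
}

# large_files ROOT MIB: regular files larger than MIB mebibytes
large_files() {
    large_root="${1%/}"
    # ${large_root:-/} falls back to / when ROOT itself was /
    find "${large_root:-/}" -xdev -type f -size +"$2"M
}
```

For example, `large_files /mnt/evidence 100` would list files over 100 MiB. The size threshold is a judgment call that depends on what the instance normally stores.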

SUID

SUID = Set owner User ID

Normal programs execute with the permissions of the logged-in user who executed the program.

SUID temporarily lets the user who executes the program run it with the permissions of the file's owner.

SUID can be used to let an executable run as root from lower-privileged accounts, which makes it a sneaky way to maintain root access. Linux ignores the SUID bit on interpreted scripts, so this usually involves a compiled binary.

In Linux, certain executables legitimately need SUID, so it is helpful to compare against a baseline volume.
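The baseline comparison can be sketched as follows, assuming both the evidence volume and a known-good baseline volume are mounted. The function names and mount points are hypothetical.

```shell
# list_suid ROOT: sorted list of SUID files, with paths relative to ROOT
list_suid() {
    # The subshell keeps the cd from affecting the caller
    ( cd "$1" && find . -xdev -type f -perm -4000 2>/dev/null | LC_ALL=C sort )
}

# new_suid EVIDENCE_ROOT BASELINE_ROOT: SUID files present only on the evidence volume
new_suid() {
    suid_ev=$(mktemp) && suid_base=$(mktemp) || return 1
    list_suid "$1" > "$suid_ev"
    list_suid "$2" > "$suid_base"
    # comm -23 keeps lines that appear only in the first (evidence) list
    LC_ALL=C comm -23 "$suid_ev" "$suid_base"
    rm -f "$suid_ev" "$suid_base"
}
```

Running `new_suid /mnt/evidence /mnt/baseline` leaves only the SUID files that the baseline does not explain. A file that exists in both lists could still have been replaced, so comparing hashes of the common entries is a sensible follow-up.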

High Entropy Files

- Entropy is an indicator of randomness

- Files with strong encryption appear to contain random data because detectable patterns would enable a cryptanalyst to break the encryption.

- Compression replaces long repeated patterns with shorter ones, so compressed files also exhibit high entropy.

- Well-encrypted files do not compress well because they have no observable patterns left to exploit.

- Packing an executable uses compression and/or encryption techniques to make a file smaller or hide its internal code.

- Searching for high-entropy files may allow the analyst to find suspicious files that are encrypted or packed.

- Linux makes extensive use of compression, so there may be many false positives.
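To make the idea concrete, the sketch below computes the Shannon entropy of a file in bits per byte (0 for a single repeated byte, approaching 8 for random data). It uses only `od` and `awk`. It is not the metric that densityscout reports, and the function names are hypothetical.

```shell
# byte_entropy FILE: Shannon entropy of FILE in bits per byte (0.000 to 8.000)
byte_entropy() {
    # od prints every byte as an unsigned decimal; awk counts how often each value occurs
    od -An -v -tu1 "$1" | awk '
        { for (i = 1; i <= NF; i++) { count[$i]++; n++ } }
        END {
            for (b in count) { p = count[b] / n; h -= p * log(p) }
            printf "%.3f\n", (n ? h / log(2) : 0)
        }'
}

# scan_entropy DIR MIN: entropy and path of each file in DIR scoring MIN or higher
# (file names containing newlines are not handled by this simple loop)
scan_entropy() {
    find "$1" -xdev -type f | while IFS= read -r ent_file; do
        ent=$(byte_entropy "$ent_file")
        # awk performs the floating-point comparison that sh cannot
        if awk -v e="$ent" -v m="$2" 'BEGIN { exit !(e >= m) }'; then
            printf '%s\t%s\n' "$ent" "$ent_file"
        fi
    done
}
```

Something like `scan_entropy /mnt/evidence/tmp 7.5` narrows a directory to its most random-looking files. The 7.5 threshold is an arbitrary starting point, and compressed archives will score high as well.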

densityscout

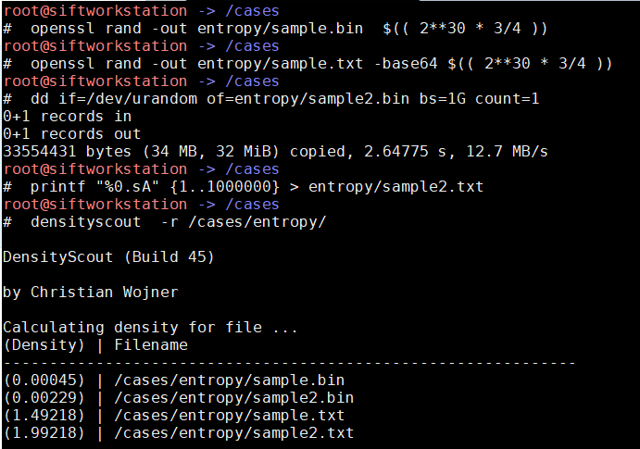

The screenshot above shows the ‘densityscout’ utility on the SIFT Workstation being run against four files created in the same session:

- sample.bin – a random file created with openssl

- sample2.bin – a random file created with /dev/urandom

- sample.txt – a random file with base64 encoding

- sample2.txt – a file with a million “A” characters

The important lesson is to make sure that any limitations of a tool are known, and that you understand exactly what the tool is telling you.

System Logs & Configuration Files

Ideally, the critical logs were offloaded to a central repository such as Splunk or an S3 bucket.

Regardless, examining the logs on the EBS volume is an important activity. System logs are a valuable source of information about the state of the system before and after the attack, and they may reveal how and when the system was compromised.

Logs & Files with Forensic Relevance

- Bash History

- /etc/passwd

- /etc/group

- Boot History

- Yum Log

- /var/log/*

- Web Server Logs

About bash History

Although the bash history is not a robust audit log, it still provides information that may be of interest to the examiner.

For example, in addition to its intended purpose of capturing the recent commands entered by a user, it can reveal the skill level and stylistic proclivities unique to a certain hacker.

A user can modify the bash history associated with their account, but will rarely do so unless trying to cover their tracks.

Because there are multiple ways to avoid bash history logging, it should not be considered a security control. Indications that bash history has been altered or evaded are noteworthy.
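A few of these indications can be checked mechanically. The sketch below assumes home directories are in /root and /home/* on the mounted volume (accounts in /etc/passwd may point elsewhere). The function name and status labels are hypothetical.

```shell
# check_bash_history ROOT
# Reports the state of .bash_history in each home directory under ROOT.
check_bash_history() {
    hist_root="${1%/}"
    for hist_home in "$hist_root"/root "$hist_root"/home/*; do
        [ -d "$hist_home" ] || continue
        hist="$hist_home/.bash_history"
        if [ -L "$hist" ]; then
            # A link to /dev/null is a common way to discard history
            printf 'SYMLINK\t%s -> %s\n' "$hist" "$(readlink "$hist")"
        elif [ ! -e "$hist" ]; then
            printf 'MISSING\t%s\n' "$hist"
        elif [ ! -s "$hist" ]; then
            printf 'EMPTY\t%s\n' "$hist"
        else
            printf 'OK\t%s (%s lines)\n' "$hist" "$(wc -l < "$hist")"
        fi
    done
}
```

A MISSING or EMPTY result is not proof of tampering, since an account that never had an interactive login will have no history either. It should be weighed against login records.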

Other System Logs

The default location for all logs is /var/log, and all logs should be perused to identify information that may be relevant to the specific investigation. The /var/log/secure file contains details about user account changes, ssh connections, sudo activity, and su sessions.

By default, Amazon Linux has the auditd service running, and the considerable amount of valuable information that it has logged will be found in /var/log/audit/*
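As one example of mining /var/log/secure, the sketch below counts successful ssh logins per user and source address. It relies on the standard sshd message form `Accepted <method> for <user> from <address>`. The function name is hypothetical, and compressed rotated logs would need to be decompressed first.

```shell
# ssh_logins ROOT
# Prints "count user address" for successful ssh logins, most frequent first.
ssh_logins() {
    log_root="${1%/}"
    # secure* also picks up uncompressed rotated copies of the log
    cat "$log_root"/var/log/secure* 2>/dev/null |
    awk '/sshd\[[0-9]+\]: Accepted / {
             for (i = 1; i < NF; i++) {
                 if ($i == "for")  user = $(i + 1)
                 if ($i == "from") addr = $(i + 1)
             }
             count[user " " addr]++
         }
         END { for (k in count) print count[k], k }' |
    sort -rn
}
```

An unfamiliar address with even a single successful login is worth checking against the other evidence collected for the instance.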

Timeline Analysis

A timeline is an indispensable tool that can unify the investigation effort. The process of creating it can help identify gaps and missing information. A summarized timeline can help to effectively communicate the sequence of events to management.

Two Types of Timelines

- File System Timeline – The MAC times for allocated and unallocated files are listed in chronological order.

- Super Timeline – Includes many system logs in addition to the file system activity.

File System Timelines

Two tools from the Sleuth Kit are used:

- fls – A utility that lists the files and directories on a disk image

- mactime – A utility that creates a timeline of file activity based on the modified, accessed, changed, and birth (MACB) timestamps

The mactime command rearranges the metadata collected by fls in temporal order, creating multiple records when the MACB timestamps on a given file differ.

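MACB Timestamps:

- M – Modified: the file's content was last changed

- A – Accessed: the file was last read

- C – Changed: the file's metadata (inode) was last changed

- B – Birth: the file was created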

DANGER: Make sure that you fully understand what the MACB timestamps are telling you! See this article to learn more about the nuances of Linux timestamps.
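To show what the reordering step does, the sketch below mimics it on a Sleuth Kit body file, whose lines have the form `MD5|name|inode|mode|UID|GID|size|atime|mtime|ctime|crtime`. This is an illustration of the idea, not a replacement for mactime. The function name is hypothetical, and file names containing `|` are not handled.

```shell
# Typical real workflow (device and file names are placeholders):
#   fls -r -m / /dev/xvdf1 > body.txt
#   mactime -b body.txt -d > timeline.csv

# body_to_timeline BODYFILE
# Prints "epoch<TAB>macb-flags<TAB>name", one record per distinct timestamp, oldest first.
body_to_timeline() {
    awk -F'|' '{
        ts[1] = $9; ts[2] = $8; ts[3] = $10; ts[4] = $11    # m, a, c, b
        for (i = 1; i <= 4; i++) {
            if (ts[i] + 0 == 0) continue                    # 0 means not recorded
            dup = 0
            for (j = 1; j < i; j++) if (ts[j] == ts[i]) dup = 1
            if (dup) continue                               # already emitted for this file
            flags = ""
            for (j = 1; j <= 4; j++)
                flags = flags (ts[j] == ts[i] ? substr("macb", j, 1) : ".")
            print ts[i] "\t" flags "\t" $2
        }
    }' "$1" | sort -n
}
```

A file whose four timestamps are all different produces four records, while a file touched only once produces a single record flagged `macb`. This is why a timeline usually has more rows than the volume has files.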

Super Timelines

Super Timelines are created and manipulated with the Plaso suite of tools, which includes:

- log2timeline – a command line tool that extracts events from individual files and creates a plaso storage file. Plaso files are designed to be efficient from a storage and performance perspective.

- pinfo – a command line tool that provides information about the plaso storage file.

- psort – a command line tool to post-process plaso storage files. It allows you to sort and filter plaso files.

The psort.py tool is used to create a CSV from the plaso file. However, an unfiltered CSV file is often too large to analyze with Microsoft Excel, so the standard process is to create a CSV limited to a date range that encompasses the events of interest to the investigation.
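The date-bounded export can be sketched as below. The helper `psort_filter` is hypothetical and only builds the filter expression. The filter syntax shown is the form accepted by older Plaso releases and is an assumption here, since newer releases use a different date syntax, so check `psort.py --help` for the installed version.

```shell
# psort_filter START END
# Prints a psort date filter expression covering START..END
# (timestamps as 'YYYY-MM-DD HH:MM:SS'; syntax assumed for older Plaso releases).
psort_filter() {
    printf "date > '%s' AND date < '%s'\n" "$1" "$2"
}

# Intended use (not run here; requires Plaso and an existing storage file,
# and the file names are placeholders):
#   psort.py -o l2tcsv -w incident.csv timeline.plaso \
#       "$(psort_filter '2020-01-10 00:00:00' '2020-01-12 00:00:00')"
```

Starting with a window slightly wider than the suspected incident and narrowing it later avoids missing precursor events.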